What Is Incrementality in Marketing? Definition, Measurement & Why It Matters in 2026


Lokeshwaran Magesh


6 Mins Read


Table of Contents

What is incrementality in marketing?

Why incrementality matters for performance marketers

Key components of an incrementality test

Incrementality vs attribution vs MMM

How incrementality works in practice

How to get started with incrementality testing

Three real incrementality examples

Frequently asked questions



Incrementality in marketing is the additional impact, or lift, that a campaign generates beyond what would have happened without it. Marketers measure it with controlled experiments comparing a treatment group exposed to the campaign against a holdout group that is not.

Platform attribution tells you where conversions happened. Incrementality tells you which of those conversions you actually caused. The gap between the two is large, growing, and increasingly the difference between profitable scale and burned budget. In April 2026, with privacy regulation, AI-driven measurement, and Meta's Incremental Attribution feature reshaping the stack, incrementality has moved from a quarterly research project to a core operating metric.

What is incrementality in marketing?

Incrementality in marketing is the measurable lift that a campaign, channel, or creative produces above a baseline of what would have happened without it. The "baseline" represents the conversions a brand would have earned through organic demand, brand recognition, retargeting overlap, or other channels running in parallel. Incrementality strips out that baseline and isolates the true causal contribution of the marketing activity.

The simplest way to think about it: every conversion in your dashboard belongs to one of two buckets. Bucket one is conversions that happened because of your ad. Bucket two is conversions that would have happened anyway. Incrementality is the science of separating those buckets.

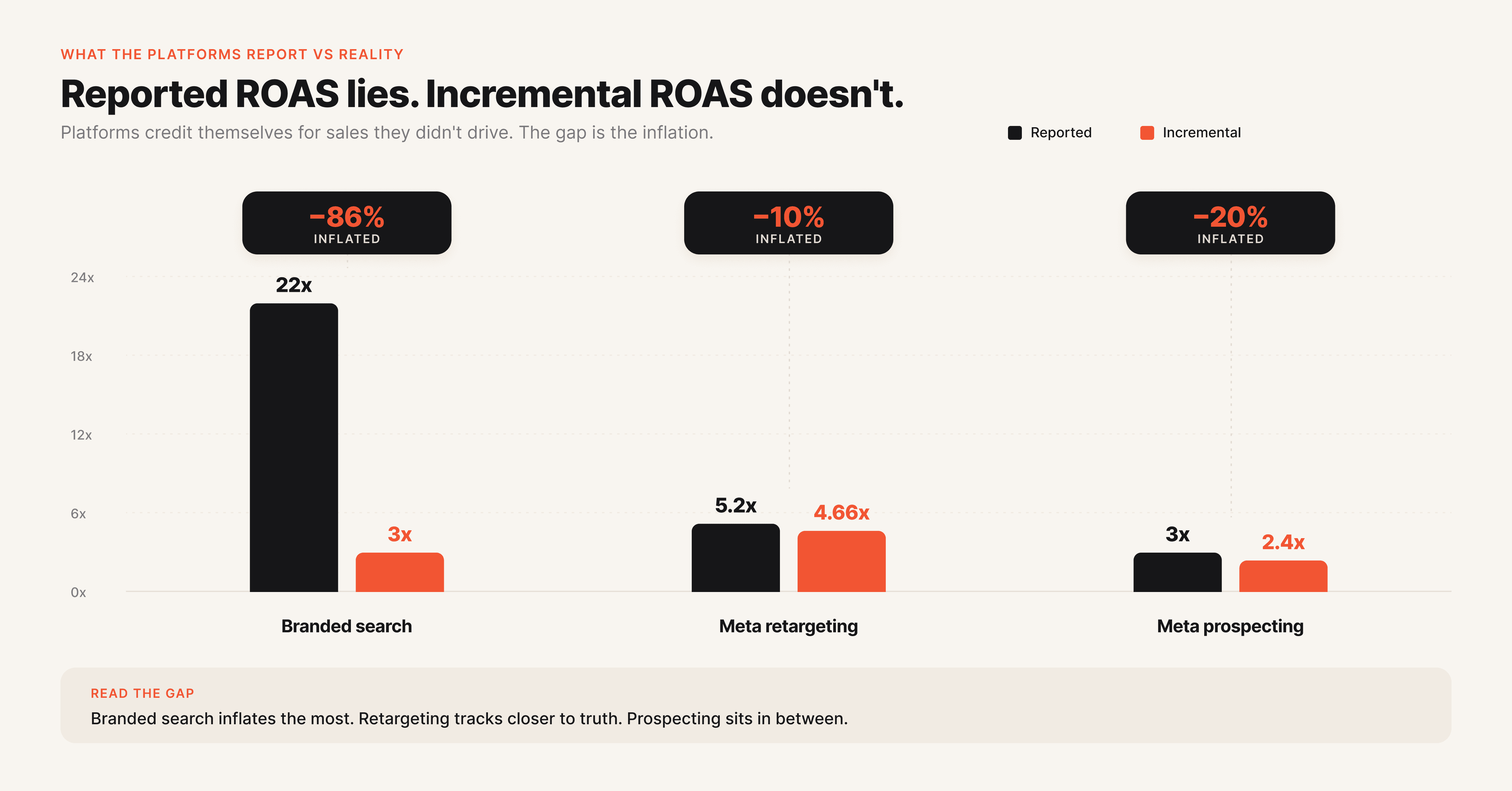

Attribution platforms like Meta Ads Manager and Google Ads cannot do this on their own because they only see users who were exposed to the ad. They have no view of what an unexposed version of the same user would have done. That blind spot is why platform-reported ROAS consistently overstates campaign value.

The technical definition relies on counterfactual reasoning. The "counterfactual" is the alternative reality in which your ad never ran. Since you cannot observe that reality directly, incrementality testing constructs it through controlled experiments. A treatment group sees the ad, a control or holdout group does not, and the difference between their conversion rates is the incremental lift.
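As a rough sketch of that comparison, the lift can be written in two forms. The symbols here are illustrative assumptions rather than notation from any platform: CR_T is the conversion rate of the treatment group and CR_C is the conversion rate of the control or holdout group.

```latex
\[
\text{absolute lift} = CR_T - CR_C,
\qquad
\text{relative lift} = \frac{CR_T - CR_C}{CR_C}
\]
```

The relative form is the one usually quoted as a percentage, because it expresses the extra conversions as a share of what the holdout group did on its own.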

This concept used to live with data scientists at large brands. It is now mainstream because of two forces. Privacy regulation has degraded the cookie and device ID signals that powered multi-touch attribution for a decade. AI and automated experimentation tools have made incrementality testing accessible to mid-market brands that could not afford a dedicated measurement team. The result is a measurement culture where "did it work" matters more than "where did the click come from".

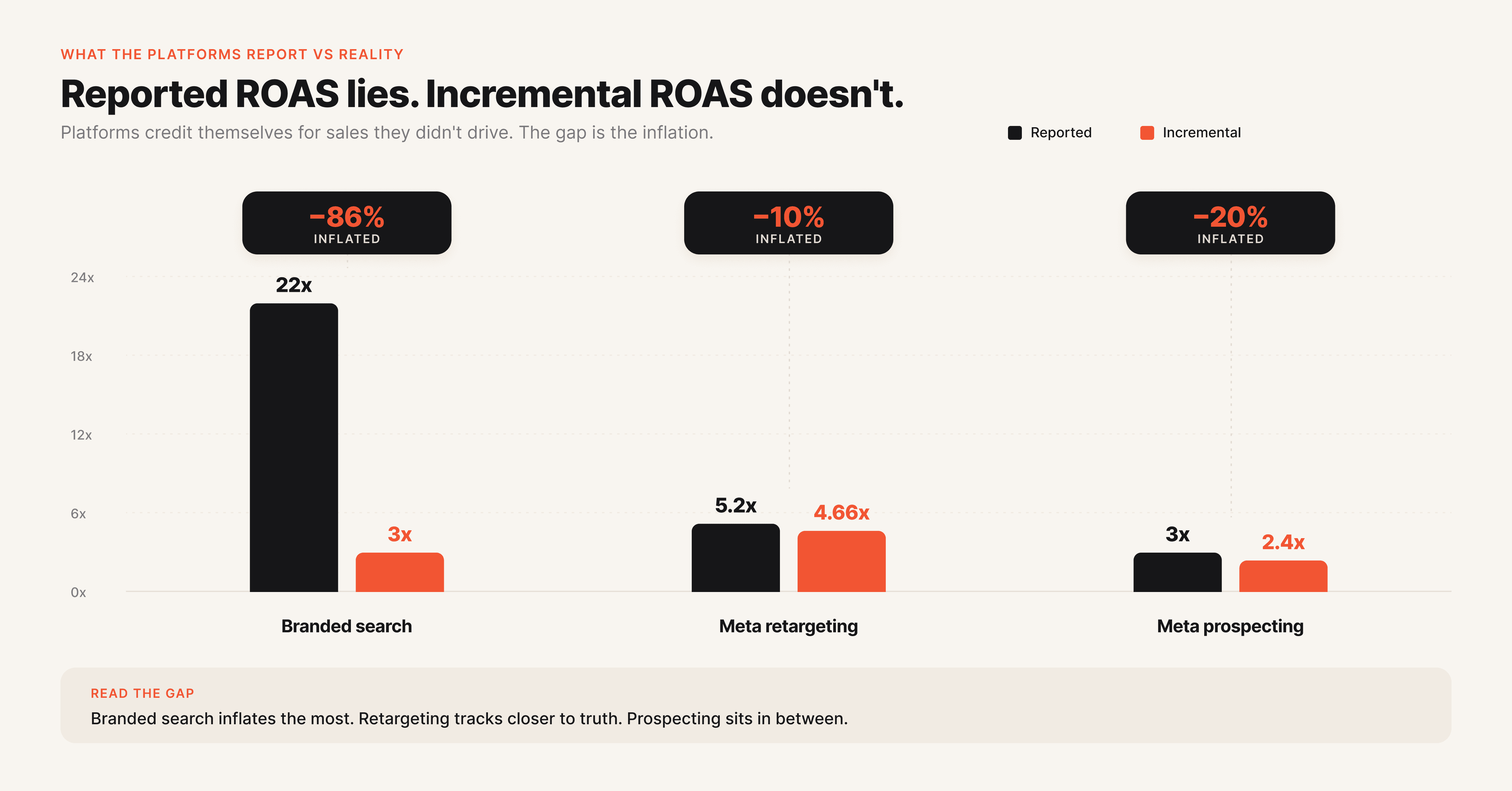

Why incrementality matters for performance marketers

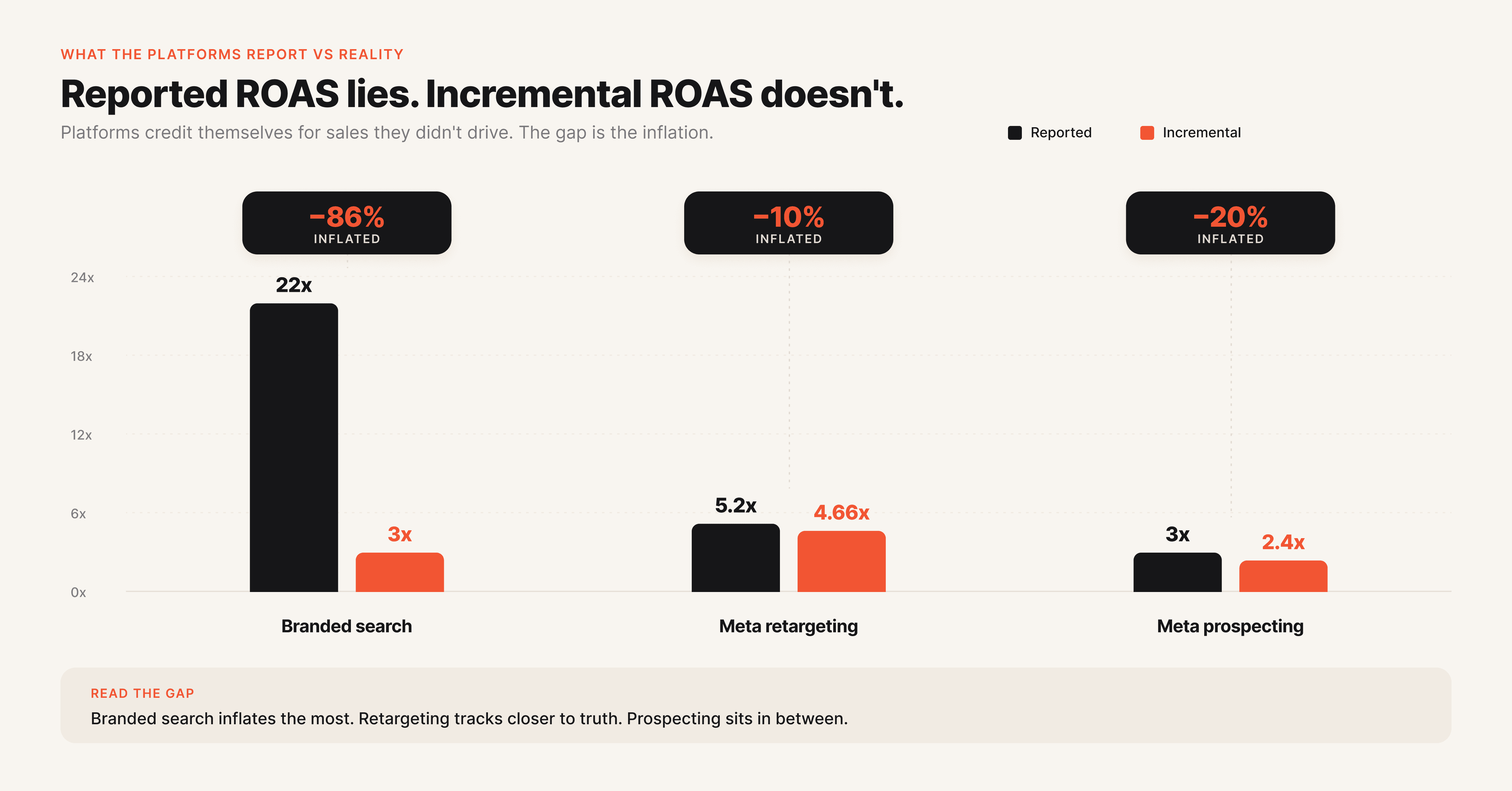

Incrementality matters because platform-reported ROAS systematically overstates the value of paid media. Research across DTC brands shows that incremental ROAS is typically 40 to 70 percent lower than reported ROAS for retargeting campaigns. For prospecting campaigns the gap narrows, with incremental ROAS landing closer to 70 to 90 percent of reported ROAS.

The takeaway is uncomfortable. A retargeting campaign showing 8x in Meta Ads Manager may be delivering closer to 3x in real, causal revenue.

That gap changes every budget decision a performance marketer makes. If a brand is scaling a channel based on inflated ROAS, it is overpaying for revenue it would have earned organically. If it is killing a top-of-funnel channel because attribution undercounts its impact, it is starving the pipeline. Without incrementality, optimization is happening on numbers that are wrong by a factor of two to five depending on the channel.

The 2026 measurement context makes this worse. Apple's privacy changes, browser restrictions on third-party cookies, and tightening consent rules have eroded the user-level signals attribution platforms depend on. Marketers who lean only on platform attribution are now optimizing against a signal that gets noisier every quarter. Incrementality, in contrast, runs on aggregate behavior and is far less exposed to the privacy shift.

The shift is showing up in budget data. According to eMarketer's 2026 measurement trends report, almost half of US marketers (46.9 percent) plan to invest more in marketing mix modeling over the next year, and 36.2 percent are planning to invest more in incrementality methodology over the same period.

More than half (52 percent) of US brand and agency marketers already use incrementality testing as part of their measurement stack. Incrementality is no longer optional. It is the backbone of credible performance reporting, alongside core metrics like CPL, CTR, and conversion rate.

Key components of an incrementality test

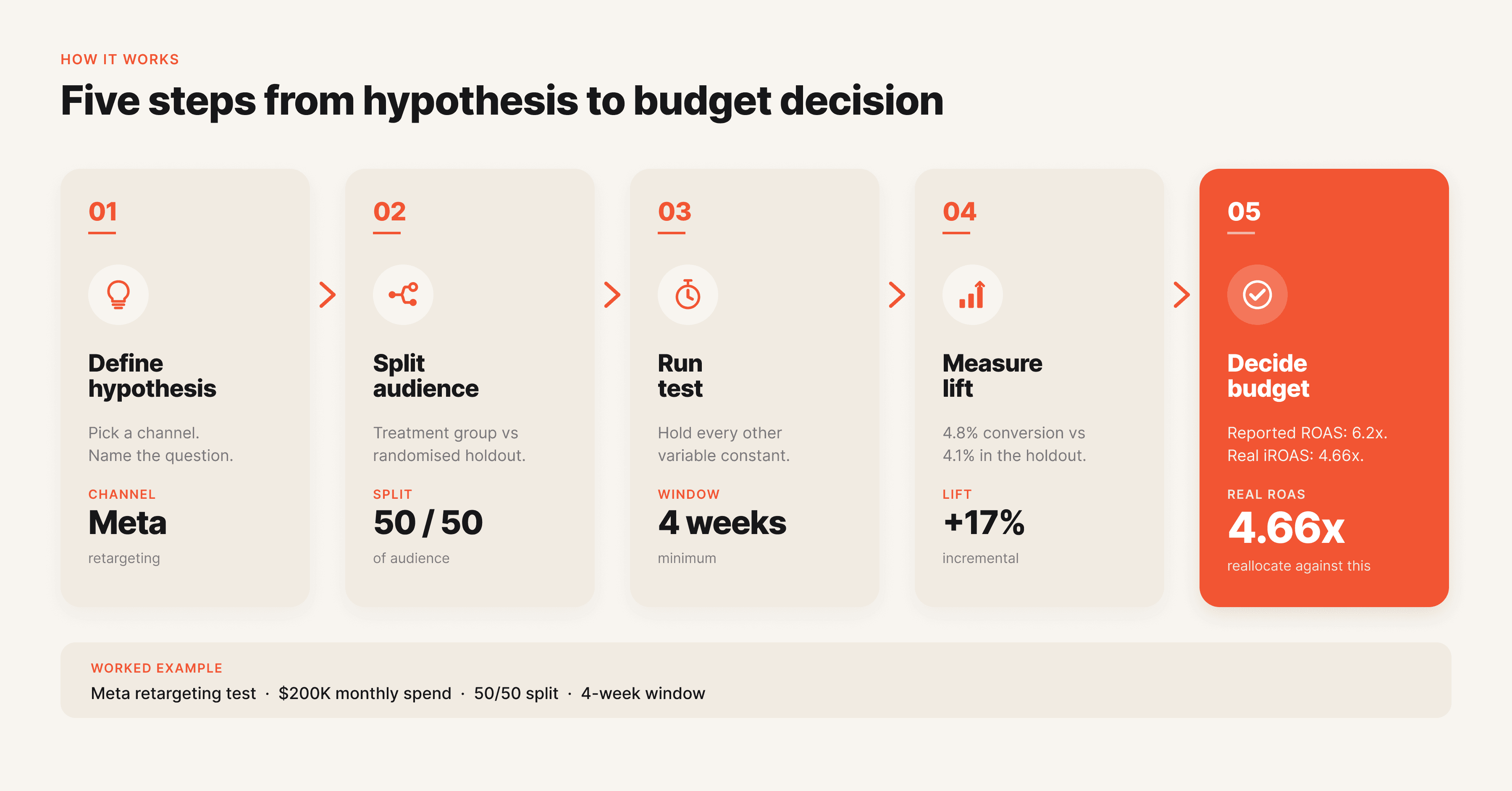

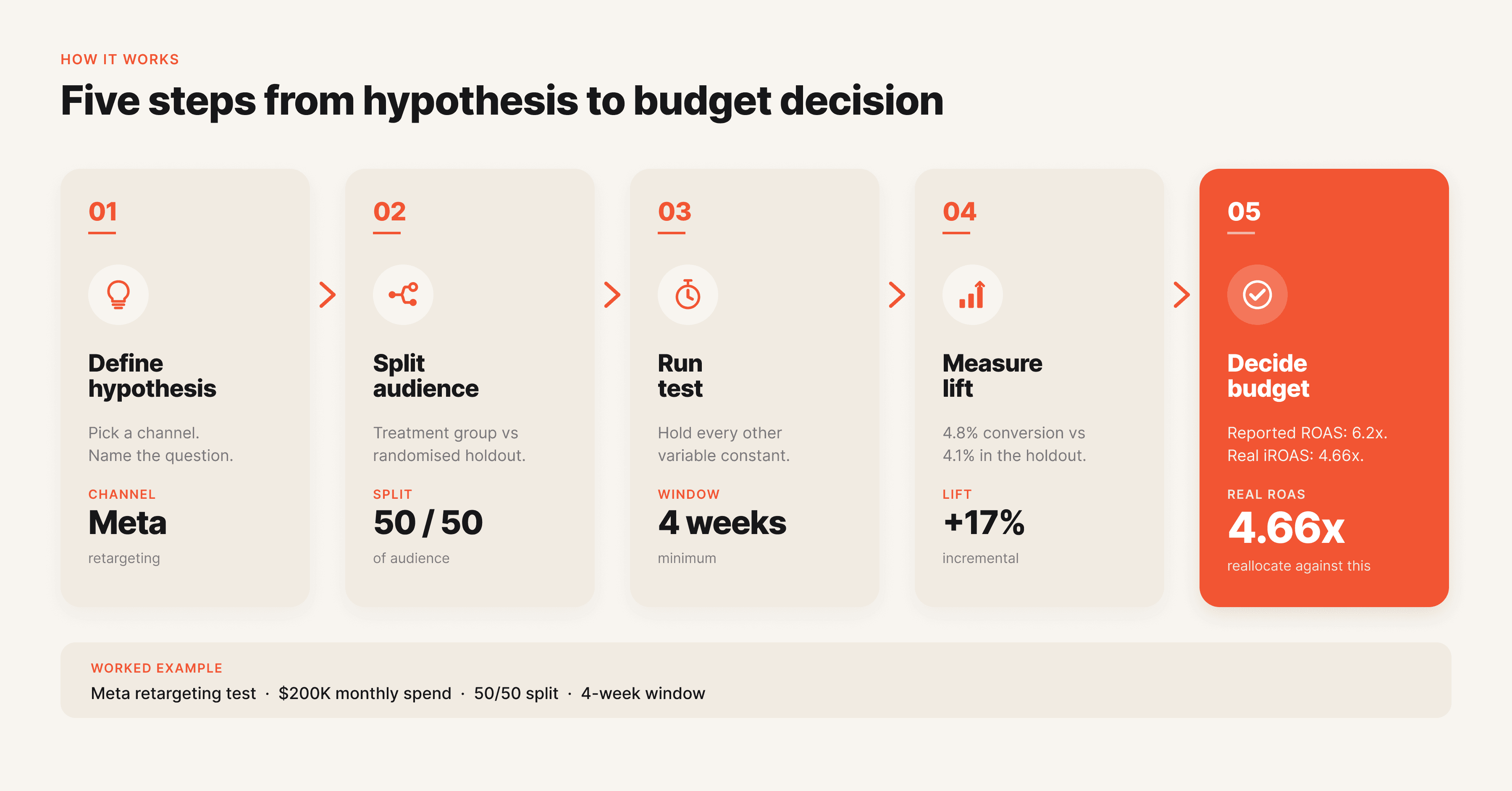

Every incrementality test, regardless of method, shares the same five components. Understanding each is the difference between a test that produces a clean answer and one that wastes a month of budget on inconclusive results.

| Component | What it does | Common failure mode |
|---|---|---|
| Hypothesis | States the specific lift you expect to measure | Vague hypotheses ("does this work?") produce vague results |
| Treatment group | The audience or geo exposed to the campaign | Group is too small to detect lift |
| Control or holdout group | The matched audience or geo that is not exposed | Group is contaminated by other channels |
| Test duration | The window over which lift is measured | Too short for the sales cycle, results are statistically insignificant |
| Statistical significance threshold | The confidence level required before acting on results | Acting on directional but non-significant lift |

The hypothesis sets the test up. A clean hypothesis names the channel, the metric, the expected lift, and the action you will take if the lift hits. Example: "Pausing branded search in Boston for 4 weeks will reduce branded conversions by less than 10 percent, indicating low incrementality and supporting a 30 percent budget cut nationally." Without that specificity the test cannot fail or succeed cleanly.

The treatment and control groups are the heart of the test. They must be statistically comparable on every dimension that matters: audience size, demographics, baseline conversion rate, seasonality exposure, and parallel channel activity. The gold standard is randomized assignment at the user, geo, or audience level. Where randomization is not possible, synthetic control methods construct a comparable counterfactual using historical data.
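Randomized assignment at the user level can be sketched in a few lines. This is a minimal illustration, not a description of how any ad platform splits audiences: the function name `assign_group`, the test name, and the synthetic user IDs are all hypothetical. It hashes each user ID with a per-test salt so the split is effectively random across users but stable for any single user.

```python
import hashlib


def assign_group(user_id: str, test_name: str, holdout_share: float = 0.5) -> str:
    """Deterministically assign a user to 'treatment' or 'holdout'."""
    # Salting with the test name gives each test its own independent split.
    digest = hashlib.sha256(f"{test_name}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex characters to a number in [0, 1).
    bucket = int(digest[:8], 16) / 16**8
    return "holdout" if bucket < holdout_share else "treatment"


# Hypothetical audience of 10,000 synthetic user IDs.
users = [f"user-{i}" for i in range(10_000)]
groups = [assign_group(u, "meta-retargeting-test") for u in users]
holdout_rate = groups.count("holdout") / len(groups)
print(f"holdout share: {holdout_rate:.3f}")
```

Because the assignment depends only on the user ID and the test name, the same user lands in the same group every time the audience is rebuilt, which helps keep the holdout uncontaminated over the life of the test.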

Test duration must match the conversion window. A campaign with a 14-day consideration cycle needs at least four weeks of data to capture the full lift.

Statistical significance is the final gate. A test that reports a 12 percent lift with 60 percent confidence is not a result. It is noise. Marketers need 90 percent or 95 percent confidence before reallocating budget against the finding.
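One common way to put a confidence number on a lift is a two-proportion z-test. The sketch below is an assumption about method, since the article does not say which test to use, and it treats "confidence" as one minus the one-sided p-value. The function name and the conversion counts are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(conv_t: int, n_t: int, conv_c: int, n_c: int):
    """Return (relative_lift, z, one_sided_confidence) for treatment vs control."""
    p_t, p_c = conv_t / n_t, conv_c / n_c
    # Pooled conversion rate under the null hypothesis of no difference.
    p_pool = (conv_t + conv_c) / (n_t + n_c)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_t + 1 / n_c))
    z = (p_t - p_c) / se
    # Standard normal CDF expressed through the error function.
    confidence = 0.5 * (1 + erf(z / sqrt(2)))
    return (p_t - p_c) / p_c, z, confidence


# Same 12 percent relative lift, two hypothetical sample sizes.
small = two_proportion_z_test(56, 500, 50, 500)
large = two_proportion_z_test(560, 5_000, 500, 5_000)
for label, (lift, z, conf) in (("small", small), ("large", large)):
    print(f"{label}: lift {lift:.0%}, z {z:.2f}, confidence {conf:.0%}")
```

With 500 users per group the 12 percent lift does not clear a 90 percent threshold. With 5,000 users per group the same lift does. The lift is identical in both cases, and only the sample size separates a result from noise.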

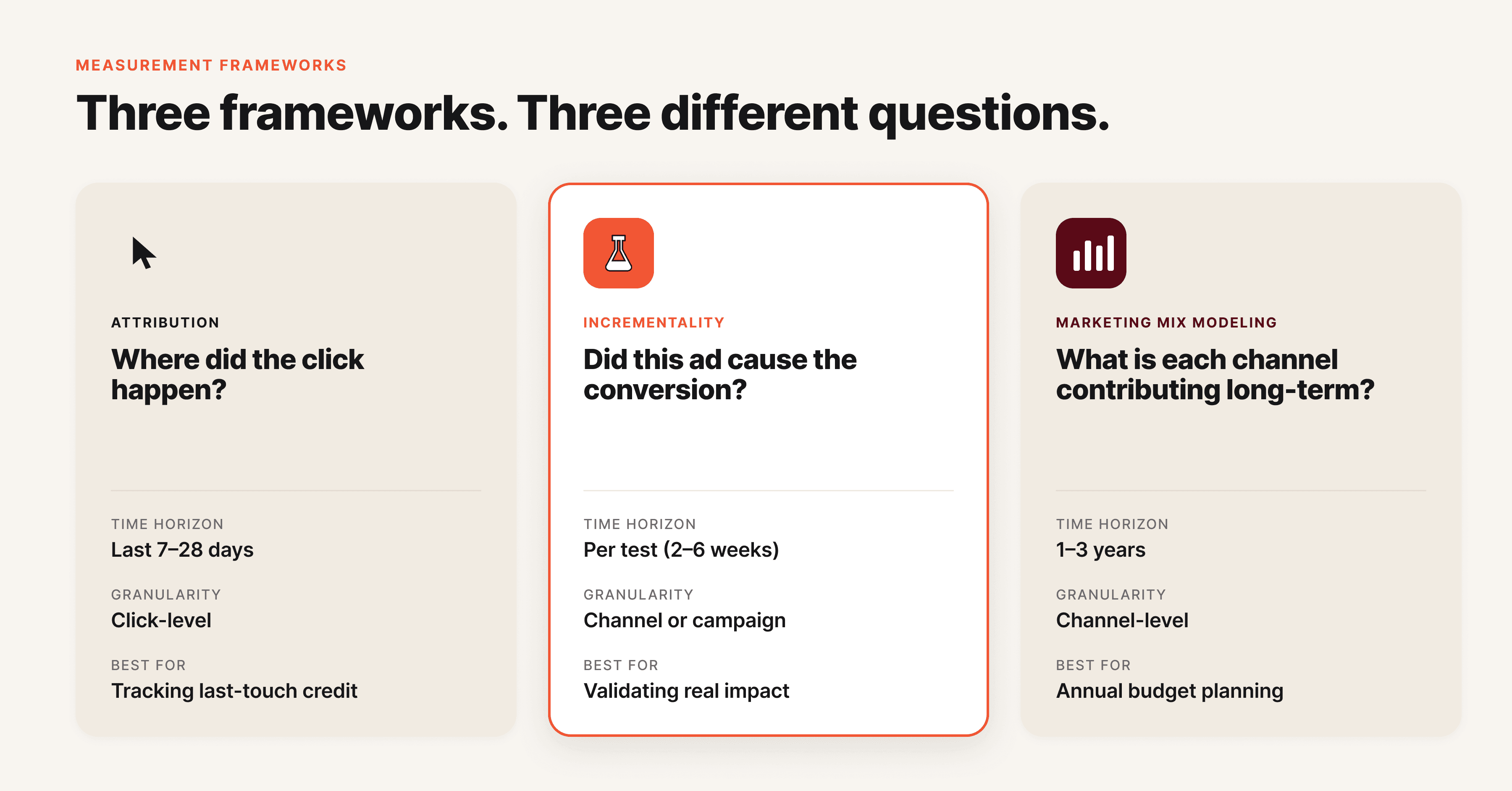

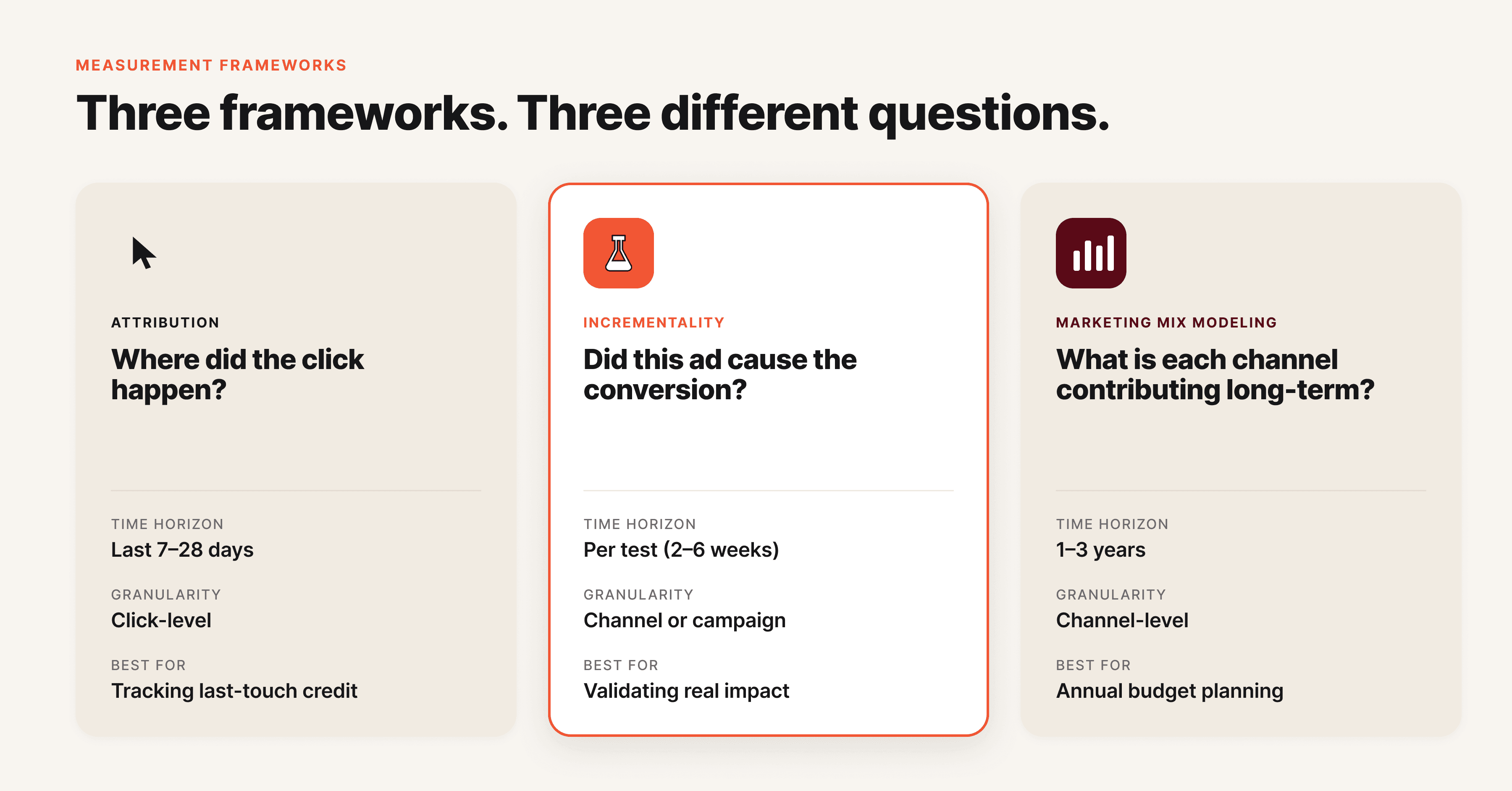

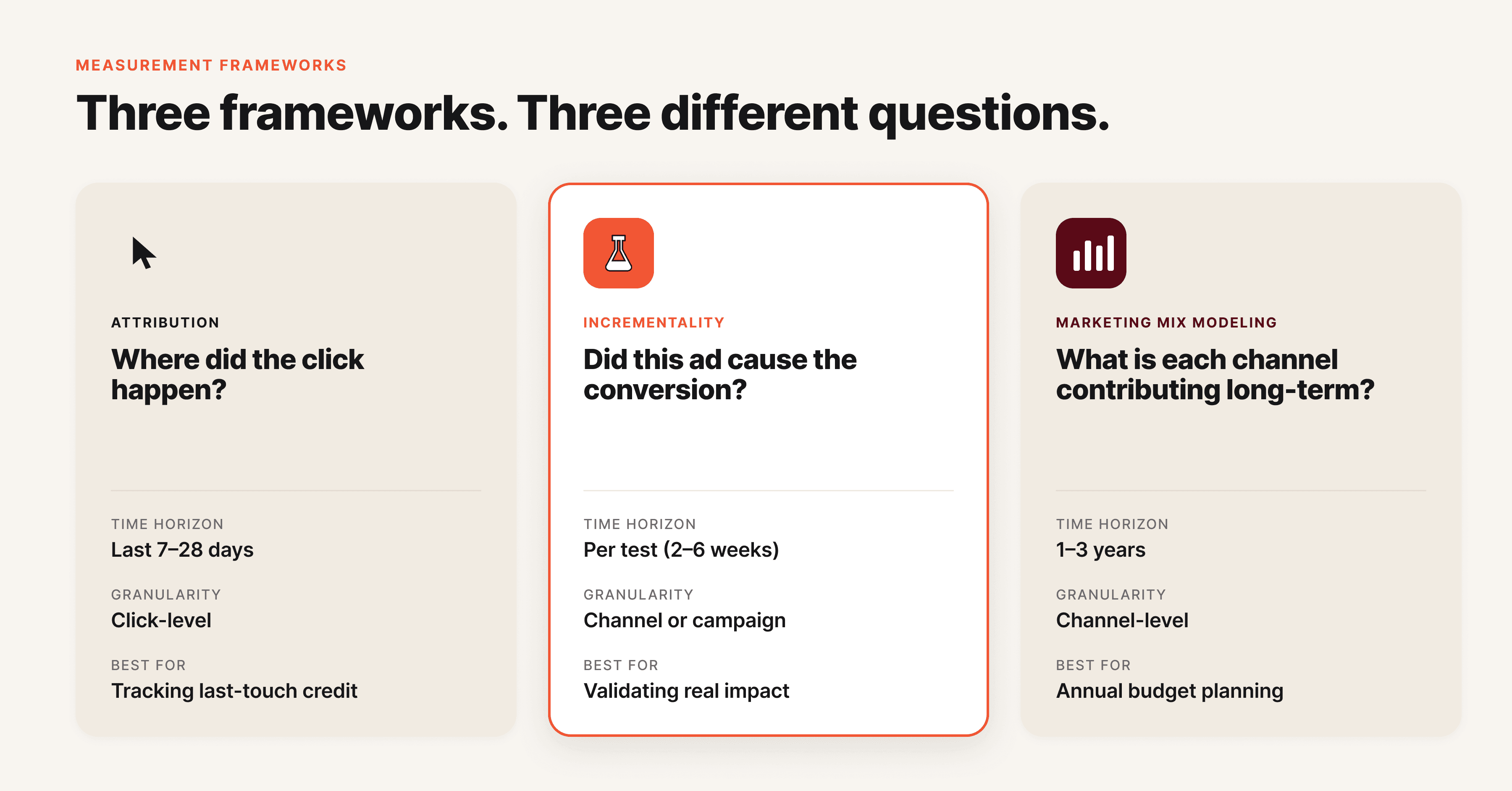

Incrementality vs attribution vs MMM

The three measurement frameworks answer different questions and work best when used together. Confusing them is one of the most common reasons measurement programs fail. Measured's incrementality vs MMM explainer lays out the differences in detail.

| Framework | Question it answers | Time horizon | Granularity | Best for |
|---|---|---|---|---|
| Attribution | Where did the click happen? | Real-time to 30 days | Campaign, ad set, ad, creative | Tactical optimization, daily decisions |
| Incrementality | Did this campaign cause the conversion? | 4 to 12 weeks | Channel or campaign | Validating attribution, channel ROI |
| Marketing Mix Modeling (MMM) | What is the long-term contribution of every channel and external factor? | 1 to 3 years of historical data | Channel, market, season | Budget planning, brand investment |

Attribution is fast but unreliable. It tells you the path the user took to convert, but it cannot tell you whether your ad caused the conversion or just got credit for one that was already going to happen. Multi-touch attribution made this worse by giving every touchpoint partial credit, including touchpoints that had no causal influence at all. For a deep look at how Meta's attribution model evolved, see Hawky's guide on mastering Meta's new attribution model.

Incrementality is rigorous but slow. A geo-based holdout test takes four to twelve weeks to produce a clean answer for one channel. You cannot run an incrementality test on every campaign every week. Incrementality earns its place by validating or rebutting what attribution tells you, not by replacing it.

MMM is the strategic frame. It takes 1 to 3 years of historical performance data and decomposes the impact of every channel, including channels you cannot directly measure (TV, OOH, podcast, organic). In 2026 MMM has become the default measurement framework for privacy-regulated industries because it requires no cookies, device IDs, or consent signals. Modern MMM is also AI-powered and integrates incrementality test results to calibrate the model.

The 2026 best practice is the unified measurement stack. MMM provides the top-down view of channel contribution. Incrementality calibrates the model and validates specific channels. Attribution informs day-to-day tactical decisions inside the platforms. Each layer answers a different question. None of them works as well alone.

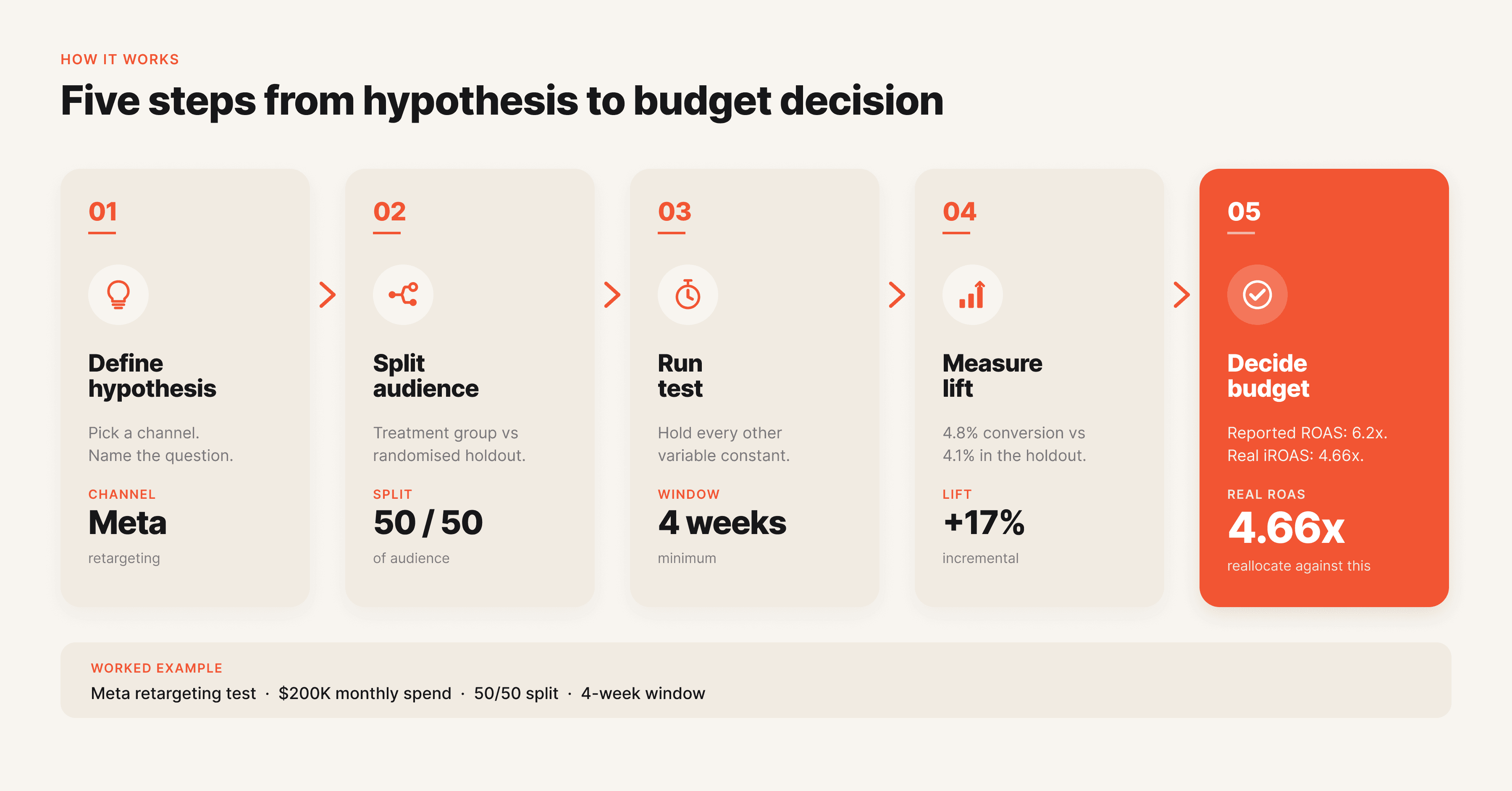

How incrementality works in practice

Picture a DTC apparel brand spending 200,000 dollars a month across Meta, Google, and TikTok. The performance team sees a 5.2x ROAS on Meta retargeting in Ads Manager. The CFO wants to scale Meta retargeting by 50 percent. Before approving, the team runs an incrementality test.

They split the customer email list into two matched audiences of 100,000 users each. The treatment group is included in the Meta retargeting custom audience. The holdout group is excluded for four weeks. Everything else stays constant: prospecting budgets, creative refreshes, email cadence, and Google Ads spend.

At the end of the four weeks, the team measures the conversion rate in each group.

The treatment group converted at 4.8 percent. The holdout group converted at 4.1 percent. The incremental lift is (4.8 minus 4.1) divided by 4.1, which equals 17 percent.

The 0.7 percentage point difference, multiplied across the 100,000 users in the treatment group, is 700 incremental conversions. At an average order value of 80 dollars, the campaign generated 56,000 dollars in incremental revenue against 12,000 dollars in spend. Incremental ROAS is about 4.67x, not the 5.2x reported in Ads Manager. Close enough that scaling makes sense, but the team now knows the real number.
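The arithmetic in this example can be reproduced with a short script. This is only a sketch of the calculation described above. The function name `incremental_results` is hypothetical, and the inputs are the figures from the apparel-brand scenario.

```python
def incremental_results(rate_t: float, rate_c: float, treated_users: int,
                        aov: float, spend: float):
    """Return (relative_lift, incremental_conversions, incremental_revenue, iroas)."""
    # Relative lift: extra conversion rate as a share of the holdout rate.
    lift = (rate_t - rate_c) / rate_c
    # Conversions the treatment group produced beyond the holdout baseline.
    incremental_conversions = (rate_t - rate_c) * treated_users
    incremental_revenue = incremental_conversions * aov
    # Incremental ROAS: incremental revenue per dollar of media spend.
    iroas = incremental_revenue / spend
    return lift, incremental_conversions, incremental_revenue, iroas


lift, conversions, revenue, iroas = incremental_results(
    rate_t=0.048, rate_c=0.041, treated_users=100_000, aov=80, spend=12_000
)
print(f"lift {lift:.1%}, conversions {conversions:.0f}, "
      f"revenue ${revenue:,.0f}, iROAS {iroas:.2f}x")
# prints: lift 17.1%, conversions 700, revenue $56,000, iROAS 4.67x
```

Swapping in a different holdout conversion rate shows how sensitive the result is to the baseline: the closer the holdout rate sits to the treatment rate, the faster incremental ROAS collapses.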

That is one channel, one campaign, one month. Repeated across the channel mix, the same methodology produces a brand-level map of which campaigns drive incremental revenue and which are reporting credit for revenue the brand would have earned anyway. Brands that build this discipline into their planning cycle compound the advantage every quarter.

Creative incrementality follows the same logic at a different layer. A new creative variant is "incremental" if it lifts performance above what existing creatives in the rotation would have delivered alone. If it just cannibalizes impressions from older winners, it adds nothing. This is also closely tied to creative fatigue, where ads decline in performance and need replacement with truly incremental variants.

AI-driven platforms like Hawky's element-level creative analysis prove their value by identifying which creative elements actually move the needle versus which are simply taking credit for impressions that were already converting.

How to get started with incrementality testing

You do not need a measurement team or a seven-figure budget to start. A 90-day plan, the right channel, and a clean test design will give you your first credible incrementality result.

Step 1: Pick your highest-spend, highest-suspicion channel. Branded search, retargeting, and Meta lookalike audiences are the three channels where reported ROAS most commonly diverges from incremental ROAS. Start where the gap is most likely to be large. The bigger the suspected gap, the more valuable the answer.

Step 2: Choose your test method. For brands with geo-distributed sales, use a geo-based holdout (turn off the channel in 5 to 10 matched markets and compare against control markets). For brands with strong audience targeting, use an audience-based holdout (split a custom audience and exclude one half). For channels with limited targeting (TV, podcast, CTV), use synthetic controls or matched-market designs. Google's incrementality testing documentation covers method selection in depth.

Step 3: Define hypothesis, duration, and success criteria upfront. Write down the expected lift, the confidence threshold, and the action you will take based on the result. If the test result is X, you will do Y. This commitment prevents post-hoc rationalization when the numbers are inconvenient.

Step 4: Run the test cleanly. Do not change anything mid-test: no creative refreshes, no budget shifts, no audience expansion. Contamination kills results. For a deeper view on creative-level testing discipline, see Hawky's guide on creative performance analysis.

Step 5: Act on the result. A test that does not change a budget decision is a wasted test. Reallocate based on the finding, then design the next test. Incrementality is a discipline, not a one-time project.

For brands building a unified 2026 measurement stack, layer in MMM after running 2 to 3 incrementality tests. The MMM model uses incrementality results as ground truth to calibrate channel-level coefficients. To pair incrementality testing with element-level performance visibility, see Hawky's Command Center.

Three real incrementality examples

Example 1: Branded search. A SaaS brand spent 40,000 dollars a month on branded search and reported a 22x ROAS in Google Ads. The team ran a 4-week geo holdout, pausing branded search in 8 mid-sized markets while keeping it on in matched control markets. Conversions in holdout markets dropped by 11 percent, not the 95 percent platform attribution implied. Incremental ROAS was closer to 3x. The brand cut branded search spend by 60 percent and reinvested in prospecting, recovering the 11 percent through paid social.

Example 2: Meta retargeting. A D2C beauty brand suspected its retargeting was over-credited. It split its 250,000-user retargeting audience in half, excluding the holdout from the campaign for 5 weeks. Incremental lift came in at 14 percent, against a reported ROAS that had implied a 60 percent contribution. The team kept retargeting but cut the budget by 40 percent and shifted spend to prospecting on Meta Advantage+, which attribution had been under-crediting.

Example 3: Creative incrementality. A subscription fitness app launched a new video creative that, in attribution, looked like a top-3 performer in the account. An A/B test rotating the new creative against a control set of three winning evergreens revealed something different. The new creative was cannibalizing 70 percent of its conversions from the existing winners. True incremental lift was only 4 percent. The team kept the creative in low rotation and shifted production budget toward variants of the existing winners, which were proven incremental drivers. This is the kind of insight that platforms with element-level creative analysis surface automatically.

Frequently asked questions

What is the difference between incrementality and attribution?

Attribution assigns credit for a conversion to a specific touchpoint based on the user journey. Incrementality measures whether the touchpoint actually caused the conversion or whether it would have happened anyway. Attribution answers "where did the credit go?" while incrementality answers "did this ad cause this sale?". The two often disagree, and incrementality is the more rigorous answer.

What is incremental ROAS?

Incremental ROAS is the return on ad spend calculated only on the revenue a campaign caused, excluding revenue that would have happened without it. It is calculated by dividing incremental revenue (revenue from the treatment group minus revenue from a control group of the same size) by total media spend. Incremental ROAS is typically 40 to 70 percent lower than platform-reported ROAS for retargeting campaigns.

How long does an incrementality test take?

A typical incrementality test runs 4 to 12 weeks. The exact duration depends on the channel, the conversion cycle, and the sample size needed for statistical significance. Tests shorter than 4 weeks rarely produce reliable results. Tests longer than 12 weeks are vulnerable to seasonal contamination and competitor activity.
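To get a feel for the sample size side of that answer, a standard two-proportion power calculation gives a rough estimate of how many users each group needs. This is a sketch under stated assumptions, not a prescribed method: it uses the normal approximation, a one-sided test, and 80 percent power, and the function name `users_per_group` and the input values are illustrative.

```python
from math import ceil
from statistics import NormalDist


def users_per_group(baseline_rate: float, relative_lift: float,
                    confidence: float = 0.95, power: float = 0.80) -> int:
    """Approximate users needed in each group to detect the given relative lift."""
    p_c = baseline_rate
    p_t = baseline_rate * (1 + relative_lift)
    # One-sided critical value for the confidence level, plus the power term.
    z_alpha = NormalDist().inv_cdf(confidence)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_c * (1 - p_c) + p_t * (1 - p_t)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_t - p_c) ** 2)


# Hypothetical baseline of 4.1 percent, two candidate lifts.
n_large_lift = users_per_group(0.041, 0.17)
n_small_lift = users_per_group(0.041, 0.05)
print(f"17% lift: {n_large_lift:,} users per group")
print(f" 5% lift: {n_small_lift:,} users per group")
```

Dividing the required users per group by the number of eligible users who enter each group per week gives a rough minimum duration. Smaller expected lifts need far larger groups, which is one reason low-lift channels take longer to test.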

Is incrementality testing the same as A/B testing?

No. A/B testing compares two creative or campaign variants against each other to see which performs better. Incrementality testing compares an exposed group against a non-exposed group to see whether the campaign drove any lift at all. A/B testing optimizes within a channel, while incrementality validates whether the channel is worth running.

Can incrementality testing work without holding back budget?

Not for true causal incrementality. Every credible method (geo holdout, audience holdout, ghost ads, synthetic controls) requires either withholding the campaign from a comparable audience or constructing a counterfactual from historical data. Methods that claim incrementality without any form of holdout are typically attribution rebadged, not true causal measurement.

What is a good incremental lift?

A "good" lift depends entirely on the channel and the cost. Incremental lift of 5 to 15 percent on a low-cost retargeting campaign can be highly profitable. Lift of 30 percent on an expensive prospecting channel may not be. The right benchmark is incremental ROAS against the brand's profit margin, not lift in isolation.

Closing

Incrementality is the difference between optimizing on what feels true and optimizing on what is true. Brands running paid media at scale in 2026 cannot afford to operate on platform-reported ROAS alone. The numbers are inflated, the inflation varies by channel, and the budget decisions made on top of them compound the error.

The path forward is not complicated. Pick a channel, design a clean test, run it for four weeks, and act on the result. Build the discipline test by test, then layer in MMM as the strategic frame. The brands doing this work today are the ones that will compound budget efficiency every quarter while their competitors keep scaling channels that look profitable on paper.

If creative incrementality is the layer you want to crack next, Hawky's element-level creative intelligence is built for that job. It identifies which hooks, visuals, and CTAs actually drive incremental performance versus which are simply riding traffic, cutting the guesswork out of creative rotation and budget allocation.


Incrementality in marketing is the additional impact, or lift, that a campaign generates beyond what would have happened without it. Marketers measure it with controlled experiments comparing a treatment group exposed to the campaign against a holdout group that is not.

Platform attribution tells you where conversions happened. Incrementality tells you which of those conversions you actually caused. The gap between the two is large, growing, and increasingly the difference between profitable scale and burned budget. In April 2026, with privacy regulation, AI-driven measurement, and Meta's Incremental Attribution feature reshaping the stack, incrementality has moved from a quarterly research project to a core operating metric.

What is incrementality in marketing?

Incrementality in marketing is the measurable lift that a campaign, channel, or creative produces above a baseline of what would have happened without it. The "baseline" represents the conversions a brand would have earned through organic demand, brand recognition, retargeting overlap, or other channels running in parallel. Incrementality strips out that baseline and isolates the true causal contribution of the marketing activity.

The simplest way to think about it: every conversion in your dashboard belongs to one of two buckets. Bucket one is conversions that happened because of your ad. Bucket two is conversions that would have happened anyway. Incrementality is the science of separating those buckets.

Attribution platforms like Meta Ads Manager and Google Ads cannot do this on their own because they only see users who were exposed to the ad. They have no view of what an unexposed version of the same user would have done. That blindspot is why platform-reported ROAS consistently overstates campaign value.

The technical definition relies on counterfactual reasoning. The "counterfactual" is the alternative reality in which your ad never ran. Since you cannot observe that reality directly, incrementality testing constructs it through controlled experiments. A treatment group sees the ad, a control or holdout group does not, and the difference between their conversion rates is the incremental lift.

This concept used to live with data scientists at large brands. It is now mainstream because of two forces. Privacy regulation has degraded the cookie and device ID signals that powered multi-touch attribution for a decade.

AI and automated experimentation tools have made incrementality testing accessible to mid-market brands that could not afford a dedicated measurement team. The result is a measurement culture where "did it work" matters more than "where did the click come from".

Why incrementality matters for performance marketers

Incrementality matters because platform-reported ROAS systematically overstates the value of paid media. Research across DTC brands shows that incremental ROAS is typically 40 to 70 percent lower than reported ROAS for retargeting campaigns. For prospecting campaigns the gap narrows, with incremental ROAS landing closer to 70 to 90 percent of reported ROAS.

The takeaway is uncomfortable. A retargeting campaign showing 8x in Meta Ads Manager may be delivering closer to 3x in real, causal revenue.

That gap changes every budget decision a performance marketer makes. If a brand is scaling a channel based on inflated ROAS, it is overpaying for revenue it would have earned organically. If it is killing a top-of-funnel channel because attribution undercounts its impact, it is starving the pipeline. Without incrementality, optimization is happening on numbers that are wrong by a factor of two to five depending on the channel.

The 2026 measurement context makes this worse. Apple's privacy changes, Chrome's third-party cookie deprecation, and tightening consent rules have eroded the user-level signals attribution platforms depend on. Marketers who lean only on platform attribution are now optimizing against a signal that gets noisier every quarter. Incrementality, in contrast, runs on aggregate behavior and survives the privacy shift intact.

The shift is showing up in budget data. According to eMarketer's 2026 measurement trends report, almost half of US marketers (46.9 percent) plan to invest more in marketing mix modeling over the next year, and 36.2 percent are planning to invest more in incrementality methodology over the same period.

More than half (52 percent) of US brand and agency marketers already use incrementality testing as part of their measurement stack. Incrementality is no longer optional. It is the backbone of credible performance reporting, alongside core metrics like CPL, CTR, and conversion rate.

Key components of an incrementality test

Every incrementality test, regardless of method, shares the same five components. Understanding each is the difference between a test that produces a clean answer and one that wastes a month of budget on inconclusive results.

Component | What it does | Common failure mode |

|---|---|---|

Hypothesis | States the specific lift you expect to measure | Vague hypotheses ("does this work?") produce vague results |

Treatment group | The audience or geo exposed to the campaign | Group is too small to detect lift |

Control or holdout group | The matched audience or geo that is not exposed | Group is contaminated by other channels |

Test duration | The window over which lift is measured | Too short for the sales cycle, results are statistically insignificant |

Statistical significance threshold | The confidence level required before acting on results | Acting on directional but non-significant lift |

The hypothesis sets the test up. A clean hypothesis names the channel, the metric, the expected lift, and the action you will take if the lift hits. Example: "Pausing branded search in Boston for 4 weeks will reduce branded conversions by less than 10 percent, indicating low incrementality and supporting a 30 percent budget cut nationally." Without that specificity the test cannot fail or succeed cleanly.

The treatment and control groups are the heart of the test. They must be statistically comparable on every dimension that matters: audience size, demographics, baseline conversion rate, seasonality exposure, and parallel channel activity. The gold standard is randomized assignment at the user, geo, or audience level. Where randomization is not possible, synthetic control methods construct a comparable counterfactual using historical data.

Test duration must match the conversion window. A campaign with a 14-day consideration cycle needs at least four weeks of data to capture the full lift. Statistical significance is the final gate.

A test that reports a 12 percent lift with 60 percent confidence is not a result. It is noise. Marketers need 90 percent or 95 percent confidence before reallocating budget against the finding.

Incrementality vs attribution vs MMM

The three measurement frameworks answer different questions and work best when used together. Confusing them is one of the most common reasons measurement programs fail. Measured's incrementality vs MMM explainer lays out the differences in detail.

Framework | Question it answers | Time horizon | Granularity | Best for |

|---|---|---|---|---|

Attribution | Where did the click happen? | Real-time to 30 days | Campaign, ad set, ad, creative | Tactical optimization, daily decisions |

Incrementality | Did this campaign cause the conversion? | 2 to 12 weeks | Channel or campaign | Validating attribution, channel ROI |

Marketing Mix Modeling (MMM) | What is the long-term contribution of every channel and external factor? | 1 to 3 years of historical data | Channel, market, season | Budget planning, brand investment |

Attribution is fast but unreliable. It tells you the path the user took to convert, but it cannot tell you whether your ad caused the conversion or just got credit for one that was already going to happen. Multi-touch attribution made this worse by giving every touchpoint partial credit, including touchpoints that had no causal influence at all. For a deep look at how Meta's attribution model evolved, see Hawky's guide on mastering Meta's new attribution model.

Incrementality is rigorous but slow. A geo-based holdout test takes four to twelve weeks to produce a clean answer for one channel. You cannot run an incrementality test on every campaign every week. Incrementality earns its place by validating or rebutting what attribution tells you, not by replacing it.

MMM is the strategic frame. It takes 1 to 3 years of historical performance data and decomposes the impact of every channel, including channels you cannot directly measure (TV, OOH, podcast, organic). In 2026 MMM has become the default measurement framework for privacy-regulated industries because it requires no cookies, device IDs, or consent signals. Modern MMM is also AI-powered and integrates incrementality test results to calibrate the model.

The 2026 best practice is the unified measurement stack. MMM provides the top-down view of channel contribution. Incrementality calibrates the model and validates specific channels.

Attribution informs day-to-day tactical decisions inside the platforms. Each layer answers a different question. None of them works as well alone.

How incrementality works in practice

Picture a DTC apparel brand spending 200,000 dollars a month across Meta, Google, and TikTok. The performance team sees a 5.2x ROAS on Meta retargeting in Ads Manager. The CFO wants to scale Meta retargeting by 50 percent. Before approving, the team runs an incrementality test.

They split the customer email list into two matched audiences of 100,000 users each. The treatment group is included in the Meta retargeting custom audience. The holdout group is excluded for four weeks. Everything else stays constant: prospecting budgets, creative refreshes, email cadence, and Google Ads spend.

At the end of the four weeks, the team measures the conversion rate in each group.

The treatment group converted at 4.8 percent. The holdout group converted at 4.1 percent. The incremental lift is (4.8 minus 4.1) divided by 4.1, which equals 17 percent.

Multiplied across the 100,000 users in the treatment group at an average order value of 80 dollars, the campaign generated 56,000 dollars in incremental revenue against 12,000 dollars in spend. Incremental ROAS is 4.66x, not the 5.2x reported in Ads Manager. Close enough that scaling makes sense, but the team now knows the real number.

That is one channel, one campaign, one month. Repeated across the channel mix, the same methodology produces a brand-level map of which campaigns drive incremental revenue and which are reporting credit for revenue the brand would have earned anyway. Brands that build this discipline into their planning cycle compound the advantage every quarter.

Creative incrementality follows the same logic at a different layer. A new creative variant is "incremental" if it lifts performance above what existing creatives in the rotation would have delivered alone. If it just cannibalizes impressions from older winners, it adds nothing. This is also closely tied to creative fatigue, where ads decline in performance and need replacement with truly incremental variants.

AI-driven platforms like Hawky's element-level creative analysis prove their value by identifying which creative elements actually move the needle versus which are simply taking credit for impressions that were already converting.

How to get started with incrementality testing

You do not need a measurement team or a seven-figure budget to start. A 90-day plan, the right channel, and a clean test design will give you your first credible incrementality result.

Step 1: Pick your highest-spend, highest-suspicion channel. Branded search, retargeting, and Meta lookalike audiences are the three channels where reported ROAS most commonly diverges from incremental ROAS. Start where the gap is most likely to be large. The bigger the suspected gap, the more valuable the answer.

Step 2: Choose your test method. For brands with geo-distributed sales, use a geo-based holdout (turn off the channel in 5 to 10 matched markets and compare against control markets). For brands with strong audience targeting, use an audience-based holdout (split a custom audience and exclude one half). For channels with limited targeting (TV, podcast, CTV), use synthetic controls or matched-market designs. Google's incrementality testing documentation covers method selection in depth.
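For an audience-based holdout, the split itself should be random and reproducible. One common approach is to hash each user ID together with the test name, so the same user always lands in the same group. The sketch below assumes string user IDs; the function name and the test name are hypothetical.

```python
import hashlib


def assign_group(user_id: str, test_name: str, holdout_share: float = 0.5) -> str:
    """Deterministically assign a user to 'holdout' or 'treatment'."""
    digest = hashlib.sha256(f"{test_name}:{user_id}".encode("utf-8")).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 2**32
    return "holdout" if bucket < holdout_share else "treatment"


# Hypothetical audience of 10,000 user IDs.
users = [f"user-{i}" for i in range(10_000)]
groups = [assign_group(u, "meta-retargeting-holdout") for u in users]
holdout_count = groups.count("holdout")
print(f"holdout share = {holdout_count / len(users):.1%}")
```

Salting the hash with the test name means a user held out in one test is not automatically held out in the next one.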

Step 3: Define hypothesis, duration, and success criteria upfront. Write down the expected lift, the confidence threshold, and the action you will take based on the result. If the test result is X, you will do Y. This commitment prevents post-hoc rationalization when the numbers are inconvenient.
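Writing down the expected lift and confidence threshold also tells you how large each group needs to be. The sketch below uses the standard normal-approximation formula for comparing two proportions. The 4.1 percent baseline is borrowed from the worked example earlier, while the 10 percent expected lift and 80 percent power are assumptions chosen for illustration.

```python
from math import ceil
from statistics import NormalDist


def users_per_group(baseline_rate: float, expected_lift: float,
                    confidence: float = 0.95, power: float = 0.80) -> int:
    """Approximate users needed in each group to detect a relative lift."""
    p_holdout = baseline_rate
    p_treatment = baseline_rate * (1 + expected_lift)
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)
    variance = p_holdout * (1 - p_holdout) + p_treatment * (1 - p_treatment)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p_treatment - p_holdout) ** 2)


n = users_per_group(baseline_rate=0.041, expected_lift=0.10)
print(f"users needed per group: {n:,}")
```

Under these assumptions the answer is in the high tens of thousands per group. Halving the expected lift roughly quadruples the requirement, which is why small audiences rarely produce a clean read on small lifts.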

Step 4: Run the test cleanly. Do not change anything mid-test: no creative refreshes, no budget shifts, no audience expansion. Contamination kills results. For a deeper view on creative-level testing discipline, see Hawky's guide on creative performance analysis.

Step 5: Act on the result. A test that does not change a budget decision is a wasted test. Reallocate based on the finding, then design the next test. Incrementality is a discipline, not a one-time project.

For brands building a unified 2026 measurement stack, layer in MMM after running 2 to 3 incrementality tests. The MMM model uses incrementality results as ground truth to calibrate channel-level coefficients. To pair incrementality testing with element-level performance visibility, see Hawky's Command Center.

Three real incrementality examples

Example 1: Branded search. A SaaS brand spent 40,000 dollars a month on branded search and reported a 22x ROAS in Google Ads. The team ran a 4-week geo holdout, pausing branded search in 8 mid-sized markets while keeping it on in matched control markets. Conversions in holdout markets dropped by 11 percent, not the 95 percent platform attribution implied. Incremental ROAS was closer to 3x. The brand cut branded search spend by 60 percent and reinvested in prospecting, recovering the 11 percent through paid social.
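A geo holdout like this one is usually read as the change in holdout markets net of the change in control markets over the same period. The sketch below shows a simple ratio-based difference-in-differences. The conversion counts are hypothetical, chosen only so the arithmetic lands on an 11 percent drop, and the function name is an assumption.

```python
def geo_holdout_effect(holdout_pre: float, holdout_post: float,
                       control_pre: float, control_post: float) -> float:
    """Relative change in holdout markets, net of the change in control markets."""
    holdout_change = holdout_post / holdout_pre
    control_change = control_post / control_pre
    return holdout_change / control_change - 1


# Hypothetical weekly-average conversions before and during the pause.
effect = geo_holdout_effect(holdout_pre=1_000, holdout_post=934.5,
                            control_pre=1_200, control_post=1_260)
print(f"net effect of pausing the channel: {effect:.1%}")
```

Comparing against control markets matters because a raw before-and-after view of the holdout markets alone would mix the effect of the pause with seasonality and other market-wide shifts.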

Example 2: Meta retargeting. A D2C beauty brand suspected its retargeting was over-credited. It split its 250,000-user retargeting audience in half, excluding the holdout from the campaign for 5 weeks. Incremental lift came in at 14 percent, against a reported ROAS that had implied a 60 percent contribution. The team kept retargeting but cut the budget by 40 percent and shifted spend to prospecting on Meta Advantage+, which attribution had been under-crediting.

Example 3: Creative incrementality. A subscription fitness app launched a new video creative that, in attribution, looked like a top-3 performer in the account. An A/B test rotating the new creative against a control set of three winning evergreens revealed something different. The new creative was cannibalizing 70 percent of its conversions from the existing winners.

True incremental lift was only 4 percent. The team kept the creative in low rotation and shifted production budget toward variants of the existing winners, which were proven incremental drivers. This is the kind of insight that platforms with element-level creative analysis surface automatically.

Frequently asked questions

What is the difference between incrementality and attribution?

Attribution assigns credit for a conversion to a specific touchpoint based on the user journey. Incrementality measures whether the touchpoint actually caused the conversion or whether it would have happened anyway. Attribution answers "where did the credit go?" while incrementality answers "did this ad cause this sale?" The two often disagree, and incrementality is the more rigorous answer.

What is incremental ROAS?

Incremental ROAS is the return on ad spend calculated only on the revenue a campaign caused, excluding revenue that would have happened without it. It is calculated by dividing incremental revenue (revenue from the treatment group minus revenue from the control group, with the control scaled to the treatment group's size when the two differ) by the media spend on the tested campaign. Incremental ROAS is typically 40 to 70 percent lower than platform-reported ROAS for retargeting campaigns.

How long does an incrementality test take?

A typical incrementality test runs 4 to 12 weeks. The exact duration depends on the channel, the conversion cycle, and the sample size needed for statistical significance. Tests shorter than 4 weeks rarely produce reliable results. Tests longer than 12 weeks are vulnerable to seasonal contamination and competitor activity.

Is incrementality testing the same as A/B testing?

No. A/B testing compares two creative or campaign variants against each other to see which performs better. Incrementality testing compares an exposed group against a non-exposed group to see whether the campaign drove any lift at all. A/B testing optimizes within a channel, while incrementality validates whether the channel is worth running.

Can incrementality testing work without holding back budget?

Not for true causal incrementality. Every credible method (geo holdout, audience holdout, ghost ads, synthetic controls) requires either withholding the campaign from a comparable audience or constructing a counterfactual from historical data. Methods that claim incrementality without any form of holdout are typically attribution rebadged, not true causal measurement.

What is a good incremental lift?

A "good" lift depends entirely on the channel and the cost. Incremental lift of 5 to 15 percent on a low-cost retargeting campaign can be highly profitable. Lift of 30 percent on an expensive prospecting channel may not be. The right benchmark is incremental ROAS against the brand's profit margin, not lift in isolation.
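Benchmarking incremental ROAS against margin comes down to a break-even check: incremental revenue times margin has to cover the spend. A minimal sketch follows, assuming a single contribution margin applies to all incremental revenue. The 40 percent margin is a hypothetical input, and the function names are illustrative.

```python
def break_even_iroas(contribution_margin: float) -> float:
    """Incremental ROAS at which incremental profit exactly covers spend."""
    if not 0 < contribution_margin <= 1:
        raise ValueError("contribution_margin must be in (0, 1]")
    return 1 / contribution_margin


def incremental_profit(spend: float, iroas: float, contribution_margin: float) -> float:
    """Profit caused by the campaign after paying for the media."""
    return spend * iroas * contribution_margin - spend


margin = 0.40  # hypothetical contribution margin
threshold = break_even_iroas(margin)
profit = incremental_profit(spend=12_000, iroas=4.67, contribution_margin=margin)
print(f"break-even iROAS = {threshold:.2f}x, incremental profit = ${profit:,.0f}")
```

At a 40 percent margin, any campaign with an incremental ROAS below 2.5x loses money on the revenue it actually causes, whatever the lift percentage looks like.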

Closing

Incrementality is the difference between optimizing on what feels true and optimizing on what is true. Brands running paid media at scale in 2026 cannot afford to operate on platform-reported ROAS alone. The numbers are inflated, the inflation varies by channel, and the budget decisions made on top of them compound the error.

The path forward is not complicated. Pick a channel, design a clean test, run it for at least four weeks, and act on the result. Build the discipline test by test, then layer in MMM as the strategic frame. The brands doing this work today are the ones that will compound budget efficiency every quarter while their competitors keep scaling channels that look profitable on paper.

If creative incrementality is the layer you want to crack next, Hawky's element-level creative intelligence is built for that job. It identifies which hooks, visuals, and CTAs actually drive incremental performance versus which are simply riding traffic, cutting the guesswork out of creative rotation and budget allocation.



